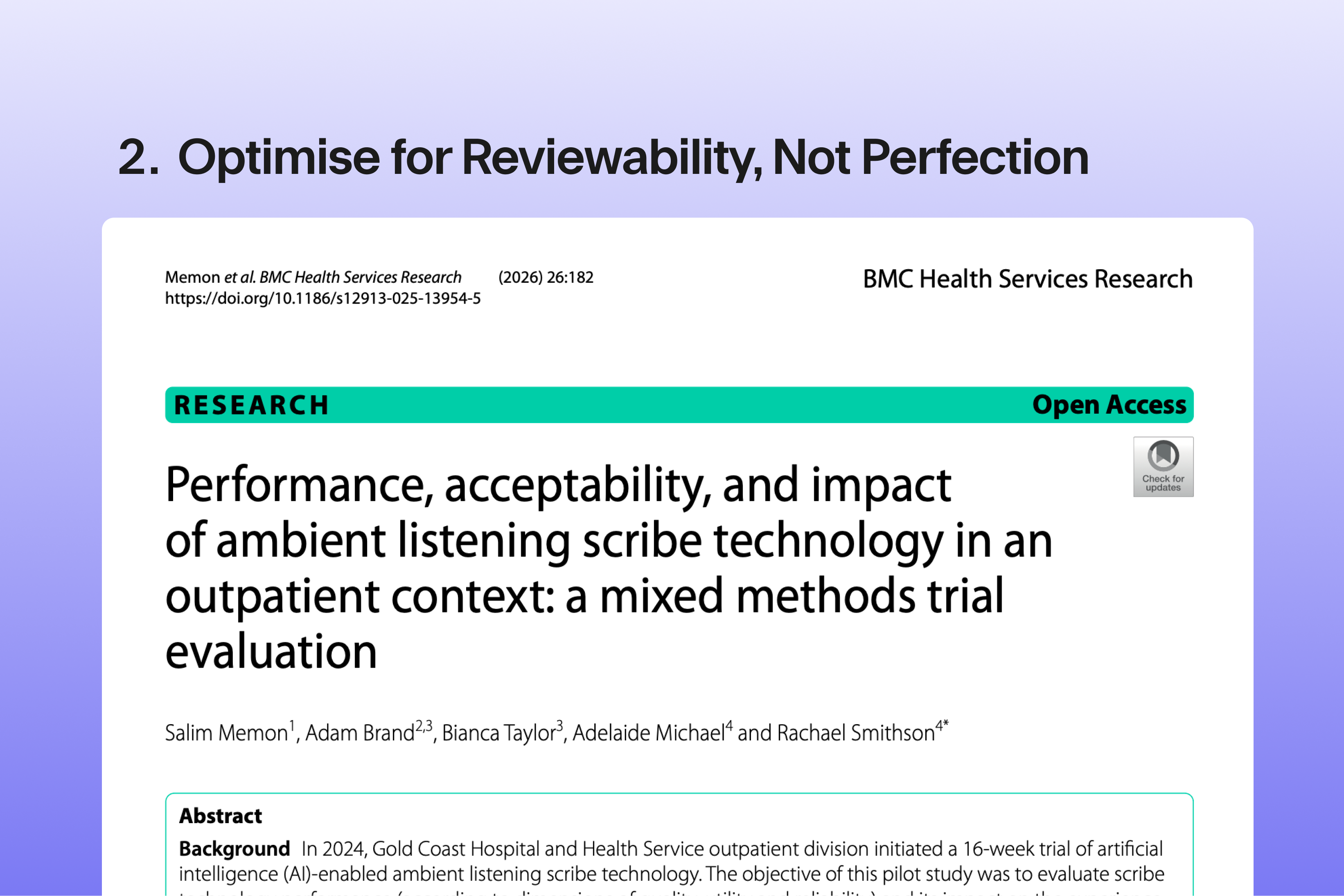

GCHHS Implementation Lessons: Optimise for Reviewability, Not Perfection

From peer-reviewed research on ambient AI in Australian outpatient clinics

This article is part of a series exploring implementation lessons from Gold Coast Hospital and Health Service's 16-week evaluation of ambient documentation across 7,499 consultations. For the full analysis and all implementation lessons, see our complete article.

A draft that supports clinical judgement

Ambient AI documentation is intended as a draft that reduces effort while keeping clinician judgement firmly in the loop. In the GCHHS evaluation, an average of 58% of outputs were accepted without modification; in the remaining cases, clinicians amended the draft before finalising.

The goal: faster reviews, not zero edits

Clinicians edited an average of 42% of ambient-generated outputs before finalising notes. This is not a limitation. It is how clinical practice handles any tool that supports decision-making:

- Junior doctors draft notes; senior clinicians review and amend

- Decision support suggests diagnoses; clinicians verify

- Templates populate fields; prescribers review for interactions

The goal is to reduce effort, not remove judgement. Good implementations make key details easy to check (medications, numbers, diagnoses, procedures) and corrections frictionless.

What makes review work

The goal isn't zero edits: it's making review faster and more reliable, and making errors harder to miss.

Key facts should be easy to verify and easy to correct, especially medications, numbers, diagnoses, procedures, laterality, allergies, and safety-critical negatives.
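As a rough illustration of what "easy to verify" could mean in software, the sketch below scans a draft note and lists the spans a reviewer may want to check first. It is not drawn from the GCHHS study or any particular product: the `ReviewFlag` type, the `flag_review_items` function, and the short regex patterns are all hypothetical, and a real system would need clinically validated vocabularies rather than these simple matches.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns, for illustration only. They cover three of the
# categories named above: numbers (with a few dose units), laterality,
# and negation cues that often mark safety-critical negatives.
PATTERNS = {
    "number": re.compile(
        r"\b\d+(?:\.\d+)?(?:\s?(?:mg|mcg|g|ml|mmhg)\b|\s?%)?", re.IGNORECASE
    ),
    "laterality": re.compile(r"\b(?:left|right|bilateral)\b", re.IGNORECASE),
    "negation": re.compile(r"\b(?:no|not|denies|without)\b", re.IGNORECASE),
}


@dataclass
class ReviewFlag:
    category: str  # which pattern matched
    text: str      # the matched span, as written in the draft
    start: int     # character offset, so a UI could highlight it


def flag_review_items(note: str) -> list[ReviewFlag]:
    """Return the spans in a draft note that a reviewer should check first."""
    flags = [
        ReviewFlag(category, match.group(0), match.start())
        for category, pattern in PATTERNS.items()
        for match in pattern.finditer(note)
    ]
    # Sort by position so flags follow the reading order of the note.
    return sorted(flags, key=lambda flag: flag.start)


draft = "Denies chest pain. Left knee swelling. Metformin 500 mg twice daily."
for flag in flag_review_items(draft):
    print(f"{flag.category:<10} {flag.text}")
```

The sketch only surfaces candidate spans; whether each one is correct remains the clinician's call, which keeps it in line with the review-first approach described here.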

What good looks like: the tool makes it easy for clinicians to quickly check the details that matter, spot anything that looks off, and amend without friction. Safe review should feel built in, not bolted on.

About this series: This article is part of a series based on independent, peer-reviewed research from Gold Coast Hospital and Health Service. For the complete analysis and all implementation lessons, read our full article.

Continue the conversation: We welcome feedback from clinicians, researchers, and healthcare leaders. Contact our team at clinical@lyrebirdhealth.com

Read the full study: Memon S, Brand A, Taylor B, Michael A, Smithson R. Performance, acceptability, and impact of ambient listening scribe technology in an outpatient context: a mixed methods trial evaluation. BMC Health Serv Res (2025).